An early embedded toolchain – different stations for different tasks

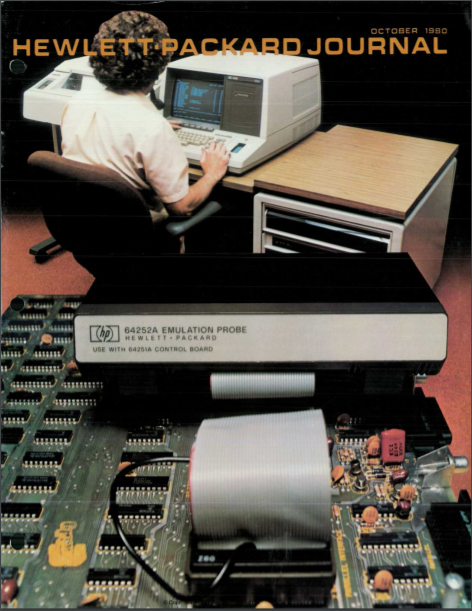

Many years ago I created embedded software for systems that measured, for example, the speed of nerve signals in the human body. Our embedded toolchain was rooted in a Unix system, with the famous “vi” editor and various command-line compilers. Whenever we needed to debug, we had to reserve the department’s scarce and expensive resource – the HP64000 emulators, with long fat cables into the hardware we were developing (see picture below). A very important part of our toolchain was the Unix “make” system. There were no unit tests.

We ran Pascal on our Intel 80x86, and I wrote C code on Unix to simulate my bit-slice signal processing. Even the native C code on the Unix system was basically debugged using “printf” statements. I don’t think any of us ever learned how to use the “core dump” that the system sometimes threw at us.

The Visual Studio Years

Years later I wrote PC code, first with Borland IDEs and later with Visual Studio. Compared to my Unix days, the turnaround time from writing code to testing it had dropped drastically, mostly because I could do both in the same tool, sitting at the same desk. For years I cherished this environment with its fast compiles and built-in debugger.

There were frustrations when my system behaved differently from that of my colleagues, and I had to go through tons of menus to find the reason. Especially annoying was the lack of readable textual makefiles, which had once allowed us to construct a deterministic build order.

At the time, Microsoft approached other tools with the motto “Embrace and extend”. It seemed, however, to be more like “embrace and extinguish”. Any functionality came wrapped into Visual Studio, with no separation possible.

The modern Heterogeneous Systems

Now we have the fantastic Linux operating system and all the open source tools. These are so dominant today that even if you do not use Linux but, say, a small real-time kernel, you still look to the Linux world for tools and ways of working.

Emulators are often not possible. Typically we perform a “remote debug”, where we sit at our PC and single-step or set breakpoints in the target. Alternatively, the target is big enough to host a decent debugger.

With Linux’s roots in Unix, it’s no wonder that some of the old concepts are coming out of the closet. Textual configuration files, like those of the make system, help us create deterministic systems where you can compile the same code on different machines on different dates and still get the same binary result. (If you build “native code”, Windows Update and the like may prevent this. To get around this, we may need to share a common virtual machine.)
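One way to check the “same binary result” claim is to hash the build output from two machines, or from two dates, and compare the digests. The following is only a minimal sketch: the function names and the paths `build_machine_a/firmware.bin` and `build_machine_b/firmware.bin` are hypothetical, and it assumes the build does not embed timestamps or absolute paths in the binary.

```python
import hashlib
from pathlib import Path


def file_digest(path, chunk_size=65536):
    """Return the SHA-256 hex digest of a file, read in chunks."""
    h = hashlib.sha256()
    with open(path, "rb") as f:
        for chunk in iter(lambda: f.read(chunk_size), b""):
            h.update(chunk)
    return h.hexdigest()


def builds_match(binary_a, binary_b):
    """True if two build outputs are byte-for-byte identical."""
    return file_digest(binary_a) == file_digest(binary_b)


if __name__ == "__main__":
    # Hypothetical paths: the same source built on two different machines.
    a = Path("build_machine_a/firmware.bin")
    b = Path("build_machine_b/firmware.bin")
    if a.exists() and b.exists():
        print("reproducible" if builds_match(a, b) else "binaries differ")
    else:
        print("build outputs not found; adjust the hypothetical paths")
```

A check like this fits naturally as the last step of a build-server job, where a mismatch is a hint that something outside the textual configuration has leaked into the build.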

This in turn allows us to do CI (Continuous Integration) and DevOps. See The Phoenix Project and Completing the circle with DevOps?

What I really don’t understand is why “dual-mode” editors like “vi” and “emacs” are popular again. Personally I spend so much time in Word, Excel and other tools that I like the smaller gap from these tools to editors like Notepad++. For longer sessions, I prefer an IDE such as Visual Studio or Eclipse that allows me to debug and gives me “IntelliSense” when I write code.

What do we need today?

I want all the abilities of an IDE, without letting go of the ability to do it all from the command line, so that I can automate it and put it on a build server.

It gets even better if my IDE can handle various third-party compilers. This could be Python (strictly speaking not a compiler, but anyway) for my PC, or C for an obscure microprocessor. This allows me to code for my custom embedded Linux system, but also to compile the same code for, and test it on, PC Linux, possibly in a virtual machine. Or maybe a Raspberry Pi. This all makes it possible to develop software in parallel with the hardware.
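Developing in parallel with the hardware usually means that the application logic talks to a narrow driver interface, which can be replaced by a stand-in on the PC until the real hardware arrives. Here is a small sketch of that idea in Python. Everything in it (`FakeTemperatureSensor`, `read_celsius`, `average_temperature`) is a hypothetical name chosen for illustration, not part of any real driver.

```python
class FakeTemperatureSensor:
    """Stand-in for a hardware driver that is not available yet (hypothetical)."""

    def __init__(self, samples):
        self._samples = list(samples)

    def read_celsius(self):
        # Hand out the canned samples one at a time.
        return self._samples.pop(0)


def average_temperature(sensor, count):
    """Application logic: depends only on read_celsius(), not on real hardware."""
    if count <= 0:
        raise ValueError("count must be positive")
    total = sum(sensor.read_celsius() for _ in range(count))
    return total / count


if __name__ == "__main__":
    # On the PC we feed known samples; on the target a real driver
    # with the same read_celsius() method would be passed in instead.
    sensor = FakeTemperatureSensor([20.0, 22.0, 24.0])
    print(average_temperature(sensor, 3))
```

The same split works in C with a header that declares the driver functions and two implementations, one for the target and one for the PC build.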

Eclipse has been open to third-party compilers for years, and nowadays Microsoft has jumped on the bandwagon with its Visual Studio Code, which is free to use, like Eclipse. The idea that Microsoft has done this is awesome, and actually, so is the implementation. It works on Linux and Mac as well as on Windows. With Visual Studio Code, Microsoft is not directly fighting open source, but this time basing its many integrated solutions on code created by volunteers (the downside is that this code cannot be used in other open source projects, which is not so popular in the open source community).

Today these IDEs integrate with a wealth of compilers, but also with CMake – the modern counterpart to make, which generates makefiles and other build files from a textual description.

At the beginning of this page I stated that we did not have unit tests in the old days. That’s a “no-go” today. Many of the advanced unit-test tools integrate into, for example, Eclipse and Visual Studio Code.

See https://www.youtube.com/watch?v=Lp1ifh9TuFI for a very nice introduction to Visual Studio Code with CMake and gtest.

Another tool that you should consider integrating into your automated command-line execution is a static code analyzer. It’s nice if this also integrates into your IDE, but it is more important that it runs each night, or whenever you check in code.
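The automated command-line execution can be as simple as a script that runs each step in order and stops at the first one that fails. The sketch below illustrates this under stated assumptions: `run_steps` is a hypothetical helper, and the three commands are placeholders that only print a message. You would substitute your own build, test and analyzer invocations.

```python
import subprocess
import sys


def run_steps(steps):
    """Run (name, argv) steps in order and stop at the first failure.

    Returns the name of the failing step, or None if all succeeded.
    """
    for name, argv in steps:
        print(f"== {name}", flush=True)
        result = subprocess.run(argv)
        if result.returncode != 0:
            return name
    return None


if __name__ == "__main__":
    # Placeholder commands: replace with your real build, unit-test
    # and static-analysis command lines.
    py = sys.executable
    steps = [
        ("build", [py, "-c", "print('compiling...')"]),
        ("unit tests", [py, "-c", "print('running tests...')"]),
        ("static analysis", [py, "-c", "print('analyzing...')"]),
    ]
    failed = run_steps(steps)
    print("all steps passed" if failed is None else f"failed at: {failed}")
```

On a build server you would typically turn a failing step into a non-zero exit code, so that the nightly job or the check-in hook is marked as failed.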

I haven’t discussed version control here, but these days “git is it”. There is more about this in my embedded book – see Embedded Software for the IoT.

I consider tools for requirements management and the like to be outside the embedded toolchain.